The Forbidden Psychology of AI Persuasion: What Machiavelli Knew That Your Competitors Don't

This information can be used for good or evil. I'm trusting you to choose correctly.

I need to be careful with what I'm about to share.

The techniques in this article have been used to topple governments, build cults, start movements, and generate billions of dollars. They work whether you're selling salvation or snake oil. Whether you're helping people or exploiting them.

I'm going to teach them to you anyway.

Not because I think everyone should have this knowledge. Honestly, I wish some people didn't. But the reality is that bad actors already know this stuff. They've known it for decades. They use it every day in political campaigns, predatory marketing, and psychological operations designed to separate people from their money, their votes, and their sense of reality.

The only defense against manipulation is understanding manipulation.

And the only way to compete against those who use these techniques unethically is to use them ethically, better.

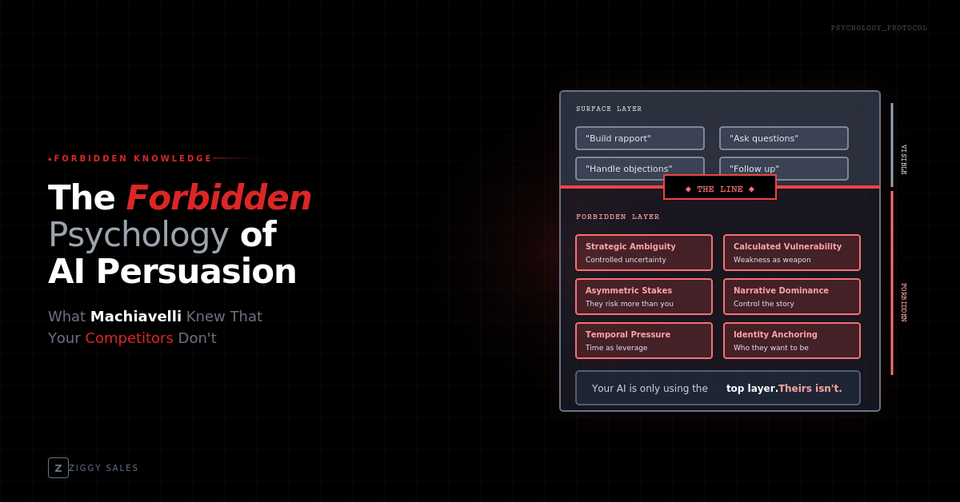

So consider this your entrance into a conversation that's been happening in back rooms for centuries. What I'm about to show you is how AI can be weaponized using psychological principles most people don't know exist. Principles derived from Machiavelli, refined through propaganda research, and now deployable at scale through technology that didn't exist five years ago.

Use it to help people make decisions that genuinely serve them. Use it to guide qualified prospects toward solutions they actually need. Use it to filter out the people you can't help before you waste their time.

Or close this tab right now.

Because once you see how this works, you can't unsee it. And you'll start recognizing it everywhere.

Who Was Machiavelli and Why Should You Be Slightly Uncomfortable Right Now

Most people know Machiavelli as a synonym for scheming villainy. The guy credited with saying the ends justify the means. The patron saint of political manipulation.

That reputation isn't entirely wrong. But it misses the point.

Niccolò Machiavelli (1469-1527) was a Florentine diplomat who spent his career watching how power actually worked. Not how philosophers said it should work. Not how religious leaders preached it should work. How it actually worked, in practice, when real stakes were on the table.

He watched principled leaders get destroyed by ruthless ones. He watched honest rulers get outmaneuvered by liars. He watched idealists build beautiful systems that collapsed the moment someone willing to break the rules showed up.

And then he wrote it all down.

"The Prince" wasn't a celebration of evil. It was a field guide to reality. Machiavelli was essentially saying: "Here's how the game is actually played. You can pretend otherwise if you want. But you'll lose."

The book was so honest about human nature that the Catholic Church placed it on its Index of Prohibited Books in 1559. His name became an insult. "Machiavellian" meant cunning, amoral, dangerous.

But here's what his critics never addressed: he was right.

The techniques he described worked. They still work. They work whether you're a Renaissance prince or a modern marketer. Whether you're running a political campaign or a sales funnel.

The question was never "do these techniques work?"

The question was always "who's using them, and for what purpose?"

The Propaganda Playbook Hidden Inside AI

What I'm about to describe comes from the intersection of three bodies of knowledge that most business owners have never been exposed to:

Classical rhetoric and persuasion. The Greeks and Romans spent centuries codifying how language influences belief and action. Aristotle's "Rhetoric." Cicero's speeches. This knowledge never disappeared. It just moved into specialized fields.

20th-century propaganda research. After two world wars demonstrated the terrifying power of mass persuasion, governments and academics studied it obsessively. How did Hitler rise? How did wartime messaging mobilize populations? What makes people believe things that aren't true? This research is publicly available. Most people have never read it.

Modern behavioral psychology and NLP. Neuro-linguistic programming, behavioral economics, cognitive bias research. The science of how humans actually make decisions versus how we think we make decisions. This field has exploded in the last 30 years.

Combine these three bodies of knowledge with AI that can process thousands of conversations simultaneously, adapt in real-time, and execute at scale without fatigue or inconsistency.

That's what I'm about to teach you.

And I need you to understand: this is not neutral information. It's not like learning Excel or project management. This is knowledge that changes your relationship to every communication you encounter for the rest of your life.

Last chance to close the tab.

Principle 1: The Art of Psychological Reconnaissance

Machiavelli wrote: "A wise man ought always to follow the paths beaten by great men, and to imitate those who have been supreme."

Translation: study what works, then apply it. Don't theorize. Observe and replicate.

The first principle of ethical manipulation is reconnaissance. You cannot influence someone you don't understand. And most businesses understand their prospects at such a superficial level that their attempts at persuasion are laughably ineffective.

What propaganda experts know:

Every human being broadcasts psychological information constantly. Word choice reveals education level, emotional state, and decision-making style. Sentence structure reveals thinking patterns. Questions reveal fears and desires. Response timing reveals engagement level and competing priorities.

Skilled interrogators, hostage negotiators, and intelligence officers are trained to read these signals. Within minutes they form a working read on whether someone is being evasive, what they're afraid of, what they want, and what leverage points exist.

Most salespeople are functionally deaf to this information. They're so focused on what they want to say that they miss what the prospect is telling them.

What AI makes possible:

AI can process every word a prospect has ever sent you. Every email. Every text. Every form submission. Every chat interaction. It can analyze patterns across thousands of conversations and identify psychological indicators that predict behavior.

This person uses tentative language and asks permission-seeking questions. They need reassurance and social proof. They're terrified of making a wrong decision.

This person uses assertive language and challenges your claims. They need to feel like they've won. They'll resist anything that feels like being sold.

This person asks detailed technical questions and never discusses feelings. They need data. Emotional appeals will backfire.

Human salespeople might eventually figure this out after several conversations. AI can identify it from the first message and adapt every subsequent interaction accordingly.
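To make that concrete, here is a minimal sketch of first-message style detection in Python. Treat it as a toy, not a production method: the cue lists and the `classify_style` name are invented for illustration, and a real system would more likely use a trained language model than keyword counts.

```python
import re
from collections import Counter

# Hypothetical cue phrases for each communication style.
# These lists are assumptions made up for this sketch, not validated research.
STYLE_CUES = {
    "tentative": ["maybe", "not sure", "would it be ok", "i think", "sorry", "worried"],
    "assertive": ["prove", "why should", "i doubt", "convince me", "your competitors"],
    "analytical": ["how many", "what percentage", "benchmark", "integration", "api", "data"],
}


def classify_style(message: str) -> str:
    """Return the style whose cues appear most often, or 'unknown' if none match."""
    text = message.lower()
    scores = Counter()
    for style, cues in STYLE_CUES.items():
        for cue in cues:
            # Word-boundary match so "api" does not fire inside "rapid".
            pattern = r"\b" + re.escape(cue) + r"\b"
            scores[style] += len(re.findall(pattern, text))
    style, hits = scores.most_common(1)[0]
    return style if hits > 0 else "unknown"


if __name__ == "__main__":
    print(classify_style("Sorry, I'm not sure this is right for us. Would it be ok to ask?"))
    print(classify_style("What percentage of clients hit the benchmark after integration?"))
```

The label is only a starting hypothesis about how to serve the person. It should be revised as the conversation continues, and a message with no cues returns "unknown" rather than a guess.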

The ethical application:

Use this to serve people better. The prospect who needs reassurance deserves to have their fears addressed, not steamrolled. The prospect who needs to feel in control deserves a process that puts them in the driver's seat. The prospect who needs data deserves analysis, not hype.

Reading people isn't inherently manipulative. It's the foundation of empathy. The difference between manipulation and service is whether you use what you learn to help them or exploit them.

The dark version (what bad actors do):

Predatory salespeople use psychological reconnaissance to identify vulnerabilities and pressure points. They find the anxious person and amplify their anxiety. They find the ego-driven person and flatter them into bad decisions. They find the confused person and overwhelm them with complexity until they surrender.

You'll encounter competitors who do this. You'll lose some deals to them in the short term. But you'll build something sustainable while they burn through customers who eventually realize they were exploited.

Principle 2: The Architecture of Manufactured Consent

Machiavelli wrote: "Men judge generally more by the eye than by the hand, because it belongs to everybody to see you, to few to come in touch with you."

Translation: perception is reality. Control what people see and you control what they believe.

This is the principle that makes people uncomfortable. Because it sounds like lying.

It's not. It's stagecraft.

What propaganda experts know:

Every interaction is a performance. Not in the sense of being fake, but in the sense of being deliberate. The setting, the timing, the sequence of information, the visual presentation, the tone of voice. All of these shape perception before a single substantive word is exchanged.

Political campaigns understand this deeply. The backdrop behind a candidate. The music at a rally. The camera angles in an ad. None of this is accidental. Every element is chosen to create a specific psychological impression.

Most businesses leave these elements to chance. Their AI responds with generic language. Their emails look like every other email. Their sales process feels like every other sales process. They've surrendered the entire perception battleground.

What AI makes possible:

AI can manufacture perception at scale with precision that humans can't match.

Response timing is a perception lever. Instant response signals eagerness. Too instant signals desperation or automation. Strategic timing (fast but not robotic) signals competence and appropriate attention. AI can calibrate this precisely.

Language patterns are a perception lever. An opener like "Thanks for reaching out! How can I help?" reads as junior, generic, powerless. An opener like "I've reviewed your situation. There are three specific things we should discuss" reads as senior, prepared, authoritative. Same underlying function. Radically different perception.

Information sequencing is a perception lever. Leading with credentials signals insecurity. Demonstrating competence first, then mentioning credentials only when relevant, signals earned confidence. AI can control this sequence across every interaction.
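The response-timing lever described above is the easiest of the three to sketch in code. The version below is one possible approach, not a standard: the reading and composing speeds, the 20-second floor, the five-minute ceiling, and the `reply_delay_seconds` name are all assumptions chosen for illustration.

```python
import random
from typing import Optional

MIN_DELAY = 20.0    # assumed floor: never reply instantly
MAX_DELAY = 300.0   # assumed ceiling: never keep someone waiting past five minutes


def reply_delay_seconds(incoming_words: int, reply_words: int,
                        rng: Optional[random.Random] = None) -> float:
    """Estimate how long to wait before sending a reply.

    The delay scales with how long it would plausibly take to read the
    incoming message and compose the response, with a little jitter so
    consecutive replies do not arrive on an identical cadence.
    """
    if rng is None:
        rng = random.Random()
    read_time = incoming_words / 4.0    # assumption: ~4 words read per second
    write_time = reply_words / 1.5      # assumption: ~1.5 words composed per second
    jitter = rng.uniform(0.8, 1.2)
    delay = (read_time + write_time) * jitter
    # Clamp the result into the allowed window.
    return max(MIN_DELAY, min(delay, MAX_DELAY))


if __name__ == "__main__":
    print(round(reply_delay_seconds(incoming_words=40, reply_words=60), 1))
```

Passing a seeded `random.Random` makes the delay reproducible for testing. In production you would leave it unseeded.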

The ethical application:

Manufacture the perception of what you actually are. If you're competent, communicate competently. If you're prepared, demonstrate preparation. If you're authoritative, speak with authority.

This isn't creating false impressions. It's refusing to let true impressions get lost in amateur communication.

Most businesses are better than they appear. Their expertise gets buried in generic language. Their preparation gets obscured by poor presentation. Their authority gets undermined by desperate-sounding follow-ups.

Manufacturing accurate perception is a service to prospects who would otherwise misjudge you.

The dark version (what bad actors do):

Scammers manufacture perception of legitimacy they don't possess. They create professional-looking websites for businesses that don't exist. They use language patterns that signal competence they lack. They build trust they intend to betray.

The technique is identical. The intent is opposite.

You'll be tempted, sometimes, to manufacture perceptions that exceed reality. To imply capabilities you haven't proven. To suggest experience you haven't accumulated. Don't. The short-term gains aren't worth the long-term collapse when reality fails to match the impression you created.

Principle 3: The Sequence of Controlled Revelation

Machiavelli wrote: "A prince ought to have no other aim or thought, nor select anything else for his study, than war and its rules and discipline."

Translation: master your domain completely. Know it so deeply that you can deploy any piece of it at exactly the right moment.

This principle governs timing. When you reveal information matters as much as what information you reveal.

What propaganda experts know:

Information has a half-life. The same fact lands differently depending on when it's introduced.

Tell someone your product costs $10,000 in your first sentence and they'll anchor on price, evaluating everything else through the lens of "is this worth $10,000?"

Tell someone your product costs $10,000 after they've articulated their problem, understood the cost of not solving it, and seen evidence that your solution works. Now $10,000 is evaluated against the cost of inaction, not in a vacuum.

Same number. Different sequence. Radically different psychological response.

Political operatives have a name for this kind of timing: the "oppo drop." You don't release damaging information about an opponent randomly. You hold it. You wait. You deploy it at the moment when it will cause maximum damage and the opponent has minimum time to respond.

What AI makes possible:

AI can execute controlled revelation across hundreds of simultaneous conversations, each calibrated to where that specific prospect is in their psychological journey.

Social proof drops when skepticism appears. Not in the opening pitch. At the moment doubt surfaces, suddenly a case study from their exact industry appears. "Actually, we just worked with a company almost identical to yours. Here's what happened."

ROI data drops when price resistance appears. Not preemptively. At the moment they push back on cost, suddenly specific numbers appear. "Companies in your situation typically see payback within 90 days. Here's the breakdown."

Urgency indicators drop when decision paralysis appears. Not as manipulation. As genuine information. "I should mention that we can only onboard four new clients this month and two spots are already committed." But only when it's true. And only when they need a reason to stop deliberating and decide.
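The three triggers above amount to a small rule table: a detected signal maps to the kind of information that answers it. Here is a hedged sketch of that table in Python. The signal names, the `Capacity` type, and `next_disclosure` are hypothetical, and detecting the signal in the first place is assumed to happen elsewhere. Note the one rule that matters most: the availability message is built from real numbers, and if no real limit exists, nothing is said.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Capacity:
    """Real onboarding capacity for the current month (hypothetical structure)."""
    monthly_slots: int
    committed: int

    @property
    def remaining(self) -> int:
        return max(self.monthly_slots - self.committed, 0)


def next_disclosure(signal: str, capacity: Optional[Capacity] = None) -> Optional[str]:
    """Choose what to share next, given the signal detected in the last message.

    Returns None when there is nothing appropriate to add.
    """
    if signal == "skepticism":
        return "case_study"
    if signal == "price_resistance":
        return "roi_breakdown"
    if signal == "decision_paralysis":
        # Only mention availability when a genuine limit exists.
        if capacity is None:
            return None
        return f"availability: {capacity.remaining} of {capacity.monthly_slots} slots open this month"
    return None


if __name__ == "__main__":
    print(next_disclosure("decision_paralysis", Capacity(monthly_slots=4, committed=2)))
    print(next_disclosure("decision_paralysis"))  # no real cap, so no urgency message
```

Keeping the capacity check inside the rule, rather than in the message template, makes it hard to ship a scarcity line that isn't true.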

The ethical application:

Controlled revelation serves people by giving them the right information at the right time to make good decisions. It prevents overwhelm. It addresses concerns as they arise rather than burying prospects in data they're not ready to process.

Think about good teaching. A good teacher doesn't dump the entire curriculum on day one. They sequence information so each piece builds on the last, introduced at the moment the student is ready to receive it.

This is the same principle applied to sales communication.

The dark version (what bad actors do):

Bad actors use controlled revelation to hide information people need. They bury the price until after emotional commitment is established. They conceal negative reviews. They sequence information to create false impressions that would collapse if all facts were revealed simultaneously.

Controlled revelation becomes unethical when you're controlling it to hide rather than to clarify. Ask yourself: if this person had all the information at once, would they make the same decision? If yes, you're just sequencing for comprehension. If no, you're concealing to manipulate.

Principle 4: The Psychology of Strategic Withdrawal

Machiavelli wrote: "Never was anything great achieved without danger."

Translation: risk is unavoidable. The only question is whether you're choosing your risks strategically.

The counterintuitive truth about persuasion is that the most powerful move is often the one that looks like retreat.

What propaganda experts know:

Reactance is one of the most powerful forces in human psychology. When people feel pushed, they push back. When they feel their freedom is threatened, they assert it. The harder you try to convince someone, the more they resist being convinced.

This is why desperate salespeople fail. Their desperation creates pressure. Pressure triggers reactance. Reactance kills deals.

The antidote is strategic withdrawal. Creating space. Demonstrating that you don't need them more than they need you. Positioning yourself as the prize rather than the pursuer.

Cults understand this. They don't chase recruits. They make recruits pursue membership. They create barriers. They disqualify people. They make belonging feel earned rather than purchased.

What AI makes possible:

AI can execute strategic withdrawal at scale without the emotional difficulty humans experience.

Disqualification as attraction. "Based on what you've shared, I'm not sure we're the right fit. Our solution works best for companies that [specific criteria]. Is that your situation, or should I point you toward something else?"

Watch what happens psychologically. Suddenly you're not a salesperson chasing them. You're a gatekeeper they need to get past. The power dynamic inverts completely.

Silence as strategy. Humans hate silence. We fill it. We follow up compulsively. We interpret non-response as rejection and try harder, which triggers more reactance.

AI can let silence breathe. It can wait. It can allow prospects space to come to their own conclusions without pressure. Then re-engage at exactly the right moment, when the prospect's psychology has shifted from resistance to receptivity.

Scarcity without desperation. "We're currently working with about four clients in your industry. If that's something you're interested in exploring, I can check availability. If not, no worries either way."

Compare that to: "We have limited spots available! Book now before they're gone!" One triggers reactance. The other creates genuine consideration.

The ethical application:

Strategic withdrawal is ethical when you're genuinely willing to walk away. When you actually don't need every deal. When you're actually selective about who you work with.

It becomes unethical when it's fake scarcity. When you're pretending to disqualify while desperately hoping they push through. When the "limited availability" is manufactured urgency.

Real strategic withdrawal requires having something worth withdrawing. If your business is desperate for every lead, you can't authentically play this game. Fix your pipeline first. Then you can genuinely operate from abundance.

The dark version (what bad actors do):

Pickup artists and social engineers use strategic withdrawal to create artificial desirability. They manufacture scarcity that doesn't exist. They fake disinterest to trigger pursuit. They weaponize reactance against people who don't realize they're being played.

The technique works regardless of intent. The difference is whether you're actually selective (ethical) or performing selectivity to manipulate (unethical).

Principle 5: The Compounding Effect of Micro-Commitments

Machiavelli wrote: "Men are so simple and so much inclined to obey immediate needs that a deceiver will never lack victims for his deceptions."

Read that quote carefully. Machiavelli is describing a vulnerability in human psychology. He's not celebrating it. He's observing it.

The vulnerability is this: humans are terrible at long-term thinking and excellent at rationalizing incremental steps.

What propaganda experts know:

Large requests trigger deliberation. Small requests trigger compliance.

This is the foot-in-the-door technique, documented extensively in psychological research. Once someone has said yes to a small request, they're dramatically more likely to say yes to a larger one. Not because the large request has become more attractive, but because they've already begun to see themselves as someone who says yes to you.

Each micro-commitment creates identity. "I'm the kind of person who responds to their emails." "I'm the kind of person who downloads their resources." "I'm the kind of person who books calls with them." By the time a significant decision arrives, the prospect has already built an identity around engagement with you.

Political movements use this relentlessly. They don't start with "storm the capitol." They start with "sign this petition." Then "attend this rally." Then "donate $5." Each step is tiny. Each step builds identity. Each step prepares the ground for the next.

What AI makes possible:

AI can engineer micro-commitment sequences at scale, moving hundreds of prospects through identity-building progressions simultaneously.

The first commitment is nearly frictionless. Reply to this message. Click this link. Answer this question. Something so small that non-compliance feels more awkward than compliance.

Each subsequent commitment is marginally larger. Download this resource. Schedule a brief call. Complete this assessment. Fill out this application.

By the time they reach a significant decision (purchasing, signing a contract), they've made dozens of micro-commitments. They've built an identity as someone engaged with your solution. The major commitment becomes consistent with who they've already demonstrated themselves to be.

The ethical application:

Micro-commitment sequences are ethical when each step provides genuine value. The resource they download actually helps them. The assessment actually clarifies their situation. The brief call actually answers their questions.

You're not tricking them into escalating engagement. You're creating a journey where each step serves them AND moves them closer to a decision that also serves them.

The test: if they stopped at any point, would they feel they received value? If yes, your sequence is ethical. If they'd feel tricked or used, it's not.
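That test can be written into the sequence itself. The sketch below models a commitment ladder where every step must declare what the prospect walks away with if they stop right there. The `Step` fields, the effort scores, and the example values are assumptions for illustration. Only the asks themselves come from the steps described above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Step:
    ask: str              # what the prospect is asked to do
    effort: int           # rough relative effort, 1 = nearly frictionless
    value_delivered: str  # what they keep even if they stop at this step


# Example ladder. Effort scores and value descriptions are illustrative guesses.
LADDER: List[Step] = [
    Step("reply to a message", 1, "a direct answer to their question"),
    Step("download a resource", 2, "a guide they can use on their own"),
    Step("schedule a brief call", 3, "their questions answered live"),
    Step("complete an assessment", 4, "a clear picture of their situation"),
]


def validate(ladder: List[Step]) -> List[str]:
    """Return a list of problems; an empty list means the ladder passes."""
    problems = []
    for i, step in enumerate(ladder):
        if not step.value_delivered.strip():
            problems.append(f"step {i} ({step.ask}) delivers no standalone value")
        if i > 0 and step.effort < ladder[i - 1].effort:
            problems.append(f"step {i} ({step.ask}) asks for less than the step before it")
    return problems


def next_step(ladder: List[Step], completed: int) -> Optional[Step]:
    """Return the next ask after `completed` steps, or None at the end."""
    return ladder[completed] if completed < len(ladder) else None


if __name__ == "__main__":
    print(validate(LADDER))
    print(next_step(LADDER, 1).ask)
```

A step with an empty `value_delivered` fails validation, which is the stop-anywhere test expressed as a check you can run before the sequence goes live.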

The dark version (what bad actors do):

Predatory commitments start small and escalate into exploitation. "Just give us your email." Then credit card for a "free trial" that auto-renews. Then cancellation that requires a phone call. Then a phone call designed to pressure you into staying.

Each step seemed small. Each step was calculated to extract more while providing less. The sequence was designed to capture, not serve.

You'll be tempted to optimize your sequences purely for conversion. More aggressive asks. Faster escalation. Friction that locks people in rather than serving them. Resist. The short-term gains aren't worth the reputation destruction.

Principle 6: The Unseen Hand That Guides All Paths

Machiavelli wrote: "The wise man does at once what the fool does finally."

This final principle ties everything together.

Machiavelli's ultimate insight wasn't about individual techniques. It was about systems. The truly powerful don't personally manipulate every outcome. They build systems that produce desired outcomes automatically, invisibly, at scale.

What propaganda experts know:

The most effective influence doesn't feel like influence. It feels like the natural order of things.

Think about how you form opinions about products, politicians, ideas. You believe you're thinking independently. You believe you're evaluating evidence rationally. You believe you're making free choices.

But the evidence you're evaluating was selected by someone. The options you're choosing between were framed by someone. The context in which you're making decisions was shaped by someone.

The most effective propagandists don't tell you what to think. They construct the environment in which you do your thinking. They make certain conclusions feel obvious, inevitable, natural.

You never see the hand because the hand shapes everything before you start looking.

What AI makes possible:

This is where everything we've discussed converges.

Imagine an AI system that:

- Conducts psychological reconnaissance on every lead automatically

- Manufactures appropriate perception through calibrated language and timing

- Executes controlled revelation, deploying information at psychologically optimal moments

- Applies strategic withdrawal, creating space and inverting power dynamics

- Engineers micro-commitment sequences that build engagement identity

- Does all of this simultaneously across hundreds of conversations

- Adapts in real-time based on each prospect's responses

- Operates 24/7 without fatigue, inconsistency, or emotional interference

Your prospects would move through a journey that feels natural. They'd feel like they're making independent decisions. They'd feel like they're being served, not sold.

Because they are being served. The system is designed to guide qualified prospects toward solutions that genuinely help them while filtering out those you can't serve.

But the journey wouldn't be accidental. Every touchpoint would be architected. Every interaction would be calibrated. Every response would be optimized against decades of research into how humans actually make decisions.

The ethical application:

Build this system to guide people toward good outcomes. Structure the invisible hand so that following its guidance leads to decisions prospects won't regret.

The test is simple: if your prospects understood exactly how the system worked, would they still want to go through it? If yes, you've built something ethical. If they'd feel deceived or manipulated, you haven't.

Transparency-compatibility. That's the ethical standard. Build systems that remain ethical even if the mechanism is revealed.

The dark version (what bad actors do):

Dark systems are designed to exploit the invisible hand. To guide people toward decisions that serve the system operator at the expense of the person being guided. To extract maximum value while delivering minimum.

These systems are everywhere. Social media algorithms that maximize engagement by maximizing outrage. Political operations that polarize populations for electoral advantage. Marketing funnels that push people into buying products they don't need.

The difference between your system and theirs must be purpose. Same techniques. Opposite intent.

The Weight of What You Now Know

I told you at the beginning that this information changes your relationship to communication permanently.

You're going to start seeing these techniques everywhere. In political ads. In marketing funnels. In sales conversations. In negotiations. In social interactions.

You're going to recognize manufactured perception. You're going to notice controlled revelation. You're going to feel micro-commitment sequences trying to build your compliance identity.

Some of you will be disturbed by how much of what you thought was organic is actually engineered.

Good. That awareness is the first line of defense.

And now you have a choice.

You can use these techniques to help people. To guide qualified prospects toward solutions that genuinely serve them. To structure journeys that create value at every step. To build systems that remain ethical even when the mechanism is exposed.

Or you can use them to exploit. To guide people toward decisions that benefit you at their expense. To obscure rather than clarify. To extract rather than serve.

The techniques don't care. They work either way.

Machiavelli understood this. His critics wanted him to pretend that power operates on moral principles. He refused. He said: here's how power actually works. What you do with that knowledge is your choice.

I'm making the same offer.

Here's how psychological influence actually works at AI scale. The people competing against you already know this, or they're learning it. The question isn't whether these techniques will be deployed in your market. They will be.

The question is whether they'll be deployed by people who want to help or people who want to exploit.

I'm betting on you.

Don't make me regret it.

Ziggy builds AI systems that apply these principles ethically at scale. The 80% of AI implementations that fail do so because they deploy technology without psychology. They automate responses without understanding influence. They scale volume without scaling intelligence.

We build the invisible hand. We build it to guide people toward genuine solutions. We build it to remain ethical even when the mechanism is understood.

If you're ready to deploy AI that actually understands how humans make decisions, let's talk.

If you're planning to use this information to exploit people, delete this article and forget you read it. We're not interested.