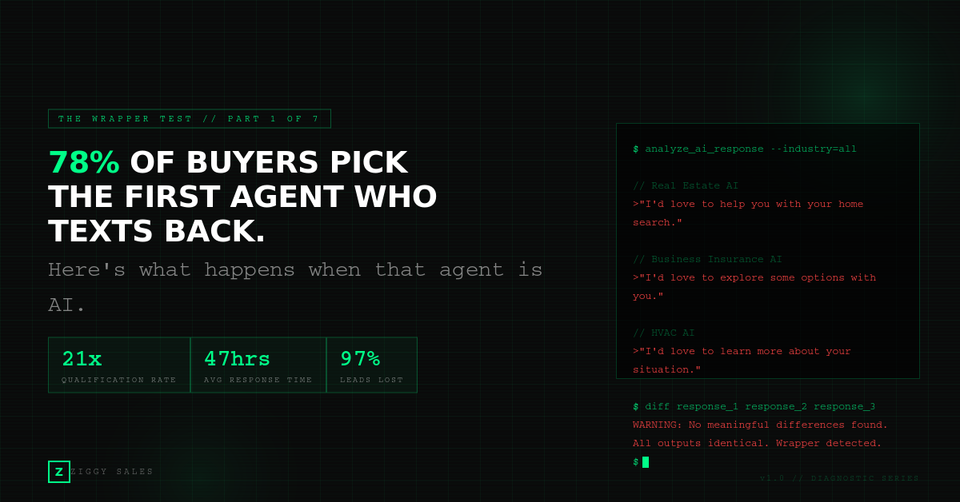

78% of Buyers Pick the First Agent Who Texts Back. Here's What Happens When That Agent Is AI (and It's Terrible).

Part 1 of 7: Understanding Wrappers vs. AI Employees

TLDR: Why You're Reading This

You're either using AI to follow up with leads right now, or you're about to. Either way, something isn't converting the way it should. This 5-minute read will show you exactly where the breakdown is happening, and it isn't where you think.

- Leads contacted within 5 minutes are 21x more likely to qualify. The average response time across service businesses: 47 hours.

- AI wrappers respond fast but sound identical across every industry. Speed without quality is speed to alienation.

- AI-native products retain at 40% vs. 82% for traditional SaaS. AI chatbot platforms churn at 6–12% per month; at the top of that range, roughly three out of four customers cancel within a year.

- The revenue difference between wrapper AI and human-quality AI follow-up on the same business insurance leads: $9,600/mo vs. $33,600/mo.

- Your action: Take the 5-question Wrapper Test at the bottom. Score your current system honestly.

Three Industries. Three Prospects. The Exact Same Message.

Here's what a real estate lead gets from an AI tool after filling out a Zillow form at 9 PM:

"Hi [First Name]! Thanks for your inquiry about [Property Address]. I'd love to help you with your home search. When would be a good time to chat?"

Here's what a business owner gets after requesting a commercial insurance quote online:

"Thanks for your interest in coverage options! I'd be happy to help you explore plans that fit your needs. What's a good time to connect?"

Here's what a homeowner gets after submitting a form for an HVAC repair estimate:

"Hi! We received your information about your service request. We'd love to learn more and see if we can help. When are you available for a quick call?"

Three different industries. Three different prospects. The exact same message, wearing a different hat.

Every single one of them sounds like what it is: a form-triggered automation pretending to be a person.

Your prospects know. They always know.

What This Is Costing You Right Now

Before we get into why this happens, let's talk about what it costs. The math is ugly and it's running every month whether you look at it or not.

Real Estate Agent spending $2,000/mo on Zillow leads:

- 30 leads per month

- Average commission on a $400K home: $12,000

- With human-quality 5-minute response: 15% conversion, 4.5 closings, $54,000/mo

- With a 15-hour response time: 3% conversion, 0.9 closings, $10,800/mo

- With an instant AI response that sounds robotic? Closer to the $10,800 than the $54,000.

Business Insurance Agency at $50 CPL, 200 leads monthly:

- Each closed policy: $1,200 first-year premium

- Agencies with personalized follow-up: 12–15% conversion, ~28 policies, $33,600/mo

- Agencies running wrapper AI: 3–5% conversion, ~8 policies, $9,600/mo

- Same leads. Same spend. $24,000/mo difference.

HVAC Company running $3,000/mo in Google Local Services ads:

- 60 inbound leads per month

- Average ticket: $8,500 (full system install) or $350 (repair)

- Blended close rate with fast, personalized follow-up: 35%, ~21 jobs, $42,000+/mo (about $2,000 per job once installs and repairs are averaged together)

- Blended close rate with generic AI response: 15%, ~9 jobs, $18,000/mo

- That's $24,000/mo walking to the competitor who called back like a human.

Contractor, mortgage lender, financial advisor, law firm: the math works everywhere. Speed without quality is speed to alienation.
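If you want to run this math on your own numbers, the scenarios above all reduce to one multiplication: leads times conversion rate times revenue per closed deal. Here is a minimal Python sketch of that. The function name `monthly_revenue` is made up for illustration. The 14% and 4% insurance rates are the ones implied by ~28 and ~8 policies out of 200 leads, and the $2,000 HVAC figure is the blended ticket implied by the totals above, not a number quoted directly.

```python
def monthly_revenue(leads: int, conversion_rate: float, revenue_per_close: float) -> float:
    """Expected monthly revenue: leads x conversion rate x revenue per closed deal."""
    return leads * conversion_rate * revenue_per_close


# (label, leads per month, conversion rate, revenue per closed deal)
scenarios = [
    ("Real estate, 5-minute human-quality reply", 30, 0.15, 12_000),
    ("Real estate, 15-hour reply", 30, 0.03, 12_000),
    ("Insurance, personalized follow-up", 200, 0.14, 1_200),  # ~28 policies
    ("Insurance, wrapper AI", 200, 0.04, 1_200),              # ~8 policies
    ("HVAC, fast personalized follow-up", 60, 0.35, 2_000),   # implied blended ticket
    ("HVAC, generic AI response", 60, 0.15, 2_000),
]

for label, leads, rate, value in scenarios:
    print(f"{label}: ${monthly_revenue(leads, rate, value):,.0f}/mo")
```

Swap in your own lead volume, close rate, and average deal value. The gap between your best-case and current-case rows is the monthly cost of the follow-up you have now.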

The Jeopardy Problem

The most useful analogy for what your AI is doing right now: imagine you hired the world's greatest Jeopardy contestant to handle your lead follow-up.

They've memorized every listing, every policy, every rate, every regulation. Ask them anything and they'll nail it instantly.

Now put them on the phone with a nervous first-time homebuyer who texts "I'm not sure if now is the right time."

The Jeopardy contestant responds with interest rates, median home prices, and inventory data. Perfect information. Completely useless. The buyer didn't ask for data. They asked for reassurance.

Or put them with an insurance lead who says "I'm just looking around, my current premiums went up." The Jeopardy contestant launches into plan comparisons. The lead wanted to be heard, not quoted at.

This is the wrapper problem.

A wrapper is a thin layer of interface on top of someone else's AI model, using your FAQ document as its only context. GoHighLevel, Hatch, Aloware, Textdrip: same model, same architecture, same limitations. The only variable is which FAQ got uploaded.

Every wrapper sounds the same because every wrapper is the same.

The Numbers Nobody Wants to Talk About

A 2025 analysis of 3,500 software companies found AI-native products have a median gross retention rate of 40%. Traditional B2B SaaS retains at 82%. AI chatbot platforms churn at 6–12% per month. At the top of that range, roughly three out of four customers cancel within a year.
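To see how a monthly churn rate turns into a yearly cancellation figure, here is a rough Python sketch. It assumes the same churn rate applies every month to a single starting group of customers, which is a simplification and not something the cited analysis states. The function name `annual_retention` is made up for illustration.

```python
def annual_retention(monthly_churn: float) -> float:
    """Share of a starting cohort still subscribed after 12 months,
    assuming the same churn rate applies every month."""
    return (1 - monthly_churn) ** 12


for churn in (0.06, 0.12):
    kept = annual_retention(churn)
    print(f"{churn:.0%} monthly churn: {kept:.0%} retained, {1 - kept:.0%} cancelled after a year")
```

Under that assumption, 6% monthly churn loses roughly half the cohort in a year, and 12% loses a little over three quarters.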

The reason cited across the research: "perceived lack of personalization and customer preference for human support."

On GoHighLevel's own community forum, users reported as recently as April 2025 that the AI "still cannot be reliably used" and "still feels like an old flow chart bot." Another user requested Gemini integration because it's "way more humane."

"Way more humane" is an extraordinary thing to say about a software product. It's an admission that the current system sounds like a machine, and customers can tell.

The Part Nobody Talks About: Why You Haven't Switched Yet

Here's the thing most articles like this skip over.

You probably already suspect your AI texting tool isn't working. You might have seen the flat response rates. You might have read conversations and cringed. You might have had a prospect tell you directly.

So why haven't you changed anything?

Because the risk of switching feels worse than the cost of staying.

What if the next tool is just as bad? What if migration breaks your pipeline for two weeks? What if you're the person who pulled the trigger on something that made things worse?

That hesitation is rational. Research on buyer behavior calls it the fear of messing up, and it's the number one reason deals stall, ahead of price, timing, and even need. People don't stay with bad solutions because they love them. They stay because the pain of a wrong choice feels sharper than the pain of the status quo.

But there's a cost to that hesitation, too. Every month you keep running a system that converts at 3–5% instead of 12–15%, you're not "playing it safe." You're paying a tax: lost revenue, wasted ad spend, and prospects who formed a permanent first impression of your business based on a robotic text they received at 9:03 PM.

The Wrapper Test below isn't asking you to switch anything. It's asking you to measure what you have, so the decision is based on evidence, not anxiety.

Take the Wrapper Test

Five questions. Sixty seconds. They'll tell you if your AI is working or just sending bad messages faster.

1. Does your AI remember conversations from last week?

A seller texts Monday about their 4-bedroom colonial. Goes quiet. Texts back Thursday about your commission structure. Does your AI know who they are?

2. When a prospect shares details, where do those details go?

They say "I have a family of four and two cars." Does your AI extract that into the lead record as structured data you can filter, sort, and act on? Or does it just keep pushing for the appointment and leave those details buried in a text thread that your sales rep has to scroll through before the call?

Most wrappers do the second one. The AI's only job is to book the call. Everything the prospect shares along the way lives and dies in the conversation log. Your rep walks into the call blind, asks the prospect to repeat themselves, and the prospect wonders why they bothered texting in the first place.
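To make "structured data" concrete, here is a toy Python sketch of the first behavior. Everything in it is hypothetical: the `LeadRecord` fields, the `update_from_message` helper, and the two regex patterns are stand-ins written for this example. A production system would more likely use a language model for extraction. The point is only where the details end up.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5, "six": 6}


def to_int(token: str) -> Optional[int]:
    """Turn '4' or 'four' into 4; return None for anything else."""
    token = token.lower()
    if token.isdigit():
        return int(token)
    return NUMBER_WORDS.get(token)


@dataclass
class LeadRecord:
    phone: str
    household_size: Optional[int] = None   # filterable field, not buried in a thread
    vehicle_count: Optional[int] = None
    raw_messages: List[str] = field(default_factory=list)


def update_from_message(lead: LeadRecord, text: str) -> LeadRecord:
    """Keep the raw text, and also copy any recognized details into fields."""
    lead.raw_messages.append(text)
    family = re.search(r"family of (\w+)", text, re.IGNORECASE)
    if family and to_int(family.group(1)) is not None:
        lead.household_size = to_int(family.group(1))
    cars = re.search(r"(\w+) cars?\b", text, re.IGNORECASE)
    if cars and to_int(cars.group(1)) is not None:
        lead.vehicle_count = to_int(cars.group(1))
    return lead


lead = update_from_message(LeadRecord(phone="+15550100"),
                           "I have a family of four and two cars.")
print(lead.household_size, lead.vehicle_count)  # 4 2
```

Once the details live in fields, your rep can filter for every lead with two or more vehicles before the call instead of scrolling through the thread.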

3. Does your AI text differently to different prospect types?

First-time buyer versus experienced investor. 65-year-old Medicare lead versus 35-year-old shopping term life. Pull up your last ten conversations. If they read the same, your AI is a substitute teacher reading from a script.

4. Who reviews messages before they hit your prospect's phone?

In a wrapper, nobody. If it hallucinates a listing price or invents a policy feature, the prospect sees it first. In insurance, that's a compliance issue.

5. Is the work split across more than one AI brain?

Qualifying, persuading, remembering, extracting data, checking its own work, adapting style: if a single model is doing all of it, that's asking your best closer to also cold call, do data entry, and run their own QA.

Three or more "no" or "I don't know" answers?

You don't have AI. You have a wrapper. And it's texting your prospects right now.
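If you'd rather score the test in a spreadsheet export or a quick script, the rule is simple enough to sketch in Python. This version assumes you have already judged each of the five questions as passed (`True`), failed (`False`), or "I don't know" (`None`). The function name `is_wrapper` is made up for illustration.

```python
from typing import Optional, Sequence


def is_wrapper(results: Sequence[Optional[bool]]) -> bool:
    """Apply the Wrapper Test threshold to five per-question results.

    True  = your system passes that question
    False = it fails
    None  = you don't know
    """
    if len(results) != 5:
        raise ValueError("The Wrapper Test has exactly five questions.")
    # A fail and an "I don't know" both count against the system.
    misses = sum(1 for r in results if r is not True)
    return misses >= 3


# Passes questions 1 and 5, fails 2 and 4, unsure about 3: three misses.
print(is_wrapper([True, False, None, False, True]))  # True
```

Counting "I don't know" as a miss is deliberate: if you can't say what your system does with a prospect's details, you can't count on it doing the right thing.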

What's Next

Part 2 goes inside the Memory Hole: what happens when your AI forgets a seller's property details mid-conversation, why insurance prospects stop responding after the third message, and the silent data corruption happening to every lead in your pipeline.

This is Part 1 of The Wrapper Test, a 7-part series on why your AI texting tools are failing and what the architectural fix looks like. New parts publish weekly.